A 4D spatiotemporal vector database for AI world models

LOCI is a middleware layer on top of Qdrant that makes spatiotemporal structure first-class through three primitives: multi-resolution Hilbert bucketing, temporal sharding, and predict-then-retrieve with novelty detection.

The problem

Existing vector databases have no concept of space or time

Modern world models — V-JEPA 2, DreamerV3, GAIA-1, UniSim — produce embeddings where every vector carries an implicit 4D address (x, y, z, t). Existing vector databases treat all dimensions equally.

A spatial query requires three independent float-range filters. Time-based retrieval has no native sharding. There is no primitive for "predict the future state, then retrieve what is spatially nearby."

Naive Qdrant

    x_min ≤ x ≤ x_max   # filter 1
    y_min ≤ y ≤ y_max   # filter 2
    z_min ≤ z ≤ z_max   # filter 3
    # 3 independent range checks
    # no temporal awareness
    # no novelty detection

LOCI

    hilbert_r4 ∈ {…}    # single pre-filter
    # covers all 3 spatial axes
    # temporal sharding routes to epochs
    # predict_and_retrieve + novelty score

Applications

What becomes possible when AI systems can remember where and when

LOCI is infrastructure. The following are representative applications that require persistent 4D memory — systems that must recall not just what they observed, but the precise spatial location and time of each observation.

Real-Time Assistant for the Visually Impaired

An AI that doesn't just see — it remembers.

Today's assistive AI describes what it sees right now. But the world is continuous: a door that was open this morning may be closed tonight, a familiar route may be blocked. With LOCI, a wearable assistant can store every observation as a spatiotemporal memory — the exact position of a kerb, the timestamp of a bus arrival, the layout of a room visited last week. When the user asks "where did I leave my keys?", the assistant queries not just space but time, retrieving the last known position within seconds.

- Remembers where objects were last seen, not just what is visible now

- Tracks familiar routes over days and weeks, alerting to changes

- Answers time-based queries: "Was the café open yesterday morning?"

Self-Driving Cars & Autonomous Drones

A vehicle that knows what was here — not just what is here.

Autonomous vehicles and drones generate continuous sensor streams, yet many current systems treat each frame largely in isolation. LOCI gives these systems a persistent 4D memory: a self-driving car can recall that a particular intersection floods after heavy rain, or that a school zone is busy every weekday between 8 and 9 am. A delivery drone can remember that a rooftop landing pad was clear last Tuesday but obstructed on Wednesday. Temporal context turns reactive systems into anticipatory ones.

- Recalls time-of-day and day-of-week patterns in the environment

- Novelty detection flags situations that have never been encountered before

- Shared fleet memory: one vehicle's experience benefits the entire fleet

Contextual Smart-Glasses Assistant

Your glasses remember everything you've ever looked at.

Smart glasses that only process what they see right now are little more than a live camera. LOCI turns them into a persistent spatial notebook. Every glance is stored as a world state — position, timestamp, and a vector embedding of the scene. When you walk back into a room, the glasses can surface relevant memories: "You left your phone on this desk at 3 pm.", "You met this person at the conference last month.", "This machine was serviced two weeks ago."

- Surfaces memories when you re-enter a familiar location

- Tracks object history: where things were, when they moved

- Builds a personal spatial knowledge graph over months of use

Autonomous Drone Navigation & Mapping

Drones that build a living map of the world over time.

A drone that maps a construction site once has a snapshot. A drone powered by LOCI has a time-lapse. Each flight adds a new temporal layer to the spatial memory: which areas changed, where new obstacles appeared, how the site evolved over weeks. Multiple drones can share the same LOCI instance, pooling observations into a single coherent world model. When a drone returns to a previously mapped zone, it can instantly query what was there last time and detect what has changed.

- Multi-drone shared memory: one fleet, one world model

- Change detection: instantly identify what moved or appeared

- Temporal mission replay: reconstruct any past flight from stored states

Time-Based Memory for Robots

A robot that learns from its own history, not just its sensors.

Most robots operate in a perpetual present: they process what their sensors report right now, with no memory of what they did an hour ago. LOCI gives robots an episodic memory in which every action, observation, and interaction is stored as a spatiotemporal world state. A warehouse robot can recall that a particular shelf is restocked at 6 am every Tuesday. A surgical assistant can retrieve the exact sequence of steps performed in a previous procedure. This is the difference between a tool that reacts and an agent that accumulates experience.

- Episodic memory: recall specific past events, not just learned patterns

- Causal chains: understand what sequence of events led to an outcome

- Continual learning: improve from experience without retraining

Multi-Resolution Hilbert Bucketing

Encode (x, y, z, t) at multiple Hilbert resolutions (p=4, 8, 12). Spatial bounding-box queries use a Hilbert integer pre-filter with overlap, then apply an exact payload post-filter as the authoritative geometric check. Dense regions are promoted to finer resolutions at query time with adaptive=True.

    from loci import LociClient, WorldState

    client = LociClient(
        "http://localhost:6333",
        vector_size=512,
        epoch_size_ms=5000,
    )

    # Placeholder query vector; in practice this comes from your world model.
    query_embedding = [0.1] * 512

    # Spatial query: single Hilbert pre-filter
    results = client.query(
        vector=query_embedding,
        spatial_bounds={
            "x_min": 0.2, "x_max": 0.8,
            "y_min": 0.0, "y_max": 1.0,
            "z_min": 0.0, "z_max": 1.0,
        },
        overlap_factor=1.2,
    )
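To make the pre-filter/post-filter split concrete, here is a minimal standalone sketch. It is not LOCI's implementation: it uses Skilling's well-known Hilbert transform, and the helper names (`hilbert_index`, `quantize`, `bucket_ids`, `covering_buckets`) are hypothetical. It assumes coordinates normalised to [0, 1] and leaves out the overlap factor and adaptive promotion.

```python
from itertools import product


def hilbert_index(cell, p):
    """Map integer grid coordinates (p bits per axis) to a Hilbert index
    using Skilling's transform. Works for any number of axes."""
    x = list(cell)
    n = len(x)
    m = 1 << (p - 1)
    q = m
    while q > 1:  # undo excess rotations, coarse bit to fine bit
        mask = q - 1
        for i in range(n):
            if x[i] & q:
                x[0] ^= mask
            else:
                t = (x[0] ^ x[i]) & mask
                x[0] ^= t
                x[i] ^= t
        q >>= 1
    for i in range(1, n):  # Gray encode
        x[i] ^= x[i - 1]
    t = 0
    q = m
    while q > 1:
        if x[n - 1] & q:
            t ^= q - 1
        q >>= 1
    x = [v ^ t for v in x]
    h = 0
    for bit in range(p - 1, -1, -1):  # interleave bits, most significant first
        for v in x:
            h = (h << 1) | ((v >> bit) & 1)
    return h


def quantize(value, p):
    """Assumed convention: map a coordinate in [0, 1] to one of 2**p cells."""
    cells = 1 << p
    return min(cells - 1, max(0, int(value * cells)))


def bucket_ids(point, resolutions=(4, 8, 12)):
    """One Hilbert bucket id per resolution for a single point."""
    return {p: hilbert_index([quantize(v, p) for v in point], p) for p in resolutions}


def covering_buckets(bounds, p):
    """All bucket ids at resolution p whose cells intersect the bounding box."""
    ranges = [range(quantize(lo, p), quantize(hi, p) + 1) for lo, hi in bounds]
    return {hilbert_index(cell, p) for cell in product(*ranges)}


bounds = [(0.2, 0.8), (0.0, 1.0), (0.0, 1.0)]
candidates = covering_buckets(bounds, p=4)

points = {
    "inside": (0.5, 0.3, 0.8),
    "edge": (0.19, 0.5, 0.5),     # shares a coarse cell with the box boundary
    "outside": (0.05, 0.5, 0.5),
}

# Coarse pre-filter: one set-membership test on the p=4 bucket id.
pre = [name for name, pt in points.items() if bucket_ids(pt)[4] in candidates]

# Exact post-filter: the authoritative geometric check.
hits = [
    name for name in pre
    if all(lo <= v <= hi for v, (lo, hi) in zip(points[name], bounds))
]
print(pre, hits)
```

The "edge" point passes the coarse bucket test but fails the exact check, which is why the post-filter has to stay authoritative.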

Temporal Sharding

Automatic routing of vectors to time-partitioned Qdrant collections (loci_{epoch_id}). Configurable epoch size. Queries fan out only to epochs that overlap the requested time window. With the async client, all shards are searched concurrently via asyncio.gather.

    import asyncio

    from loci import AsyncLociClient


    async def main(query_embedding, start_ms, end_ms, state_id):
        async with AsyncLociClient(
            "http://localhost:6333",
            vector_size=512,
            epoch_size_ms=5000,
        ) as client:
            # Concurrent fan-out across time shards
            results = await client.query(
                vector=query_embedding,
                time_window_ms=(start_ms, end_ms),
                limit=10,
            )
            # States up to 20 steps before and after state_id
            trajectory = await client.get_trajectory(
                state_id, steps_back=20, steps_forward=20
            )
        return results, trajectory


    # Run with your own inputs, e.g.:
    # asyncio.run(main(query_embedding, start_ms, end_ms, state_id))
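The routing described above can be sketched in plain Python. This is an illustration under stated assumptions, not LOCI's code: the epoch id is assumed to be the timestamp floor-divided by the epoch size, the time window is treated as inclusive, and `SHARDS` and `search_shard` are hypothetical stand-ins for per-collection vector searches.

```python
import asyncio
import heapq


def epoch_id(timestamp_ms, epoch_size_ms):
    """Assumed rule: integer epoch = timestamp floor-divided by epoch size."""
    return timestamp_ms // epoch_size_ms


def collection_name(timestamp_ms, epoch_size_ms):
    """Collection naming follows the loci_{epoch_id} pattern from the text."""
    return f"loci_{epoch_id(timestamp_ms, epoch_size_ms)}"


def epochs_for_window(start_ms, end_ms, epoch_size_ms):
    """Every epoch id that overlaps the inclusive window [start_ms, end_ms]."""
    first = epoch_id(start_ms, epoch_size_ms)
    last = epoch_id(end_ms, epoch_size_ms)
    return list(range(first, last + 1))


# Hypothetical per-shard results as (score, state_id) pairs.
SHARDS = {
    "loci_0": [(0.91, "a"), (0.40, "b")],
    "loci_1": [(0.75, "c")],
    "loci_2": [(0.95, "d")],
    "loci_3": [(0.99, "e")],  # outside the window below, so never searched
}


async def search_shard(name, limit):
    """Stand-in for one vector search against a single epoch collection."""
    await asyncio.sleep(0)  # yield control, as a real network call would
    return SHARDS.get(name, [])[:limit]


async def fan_out(start_ms, end_ms, epoch_size_ms, limit):
    """Search only the overlapping shards concurrently, then merge by score."""
    names = [f"loci_{e}" for e in epochs_for_window(start_ms, end_ms, epoch_size_ms)]
    per_shard = await asyncio.gather(*(search_shard(n, limit) for n in names))
    merged = [hit for hits in per_shard for hit in hits]
    return heapq.nlargest(limit, merged)


top_hits = asyncio.run(fan_out(4000, 12000, epoch_size_ms=5000, limit=2))
print(top_hits)
```

With a 5000 ms epoch, the window 4000 to 12000 ms touches epochs 0, 1, and 2, so the highest-scoring entry in `loci_3` is never consulted.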

Predict-then-Retrieve

An atomic API call that composes a user-supplied world model with vector search, returning both results and a novelty score. 0.0 means the predicted state has been seen before; 1.0 means it is new territory. Enables episodic memory and surprise-driven exploration.

    # `client`, `current_embedding`, `my_world_model`, and `state_id`
    # are assumed to be defined by the caller.
    result = client.predict_and_retrieve(
        context_vector=current_embedding,
        predictor_fn=my_world_model,
        future_horizon_ms=2000,
        current_position=(0.5, 0.3, 0.8),
    )

    print(f"Novelty: {result.prediction_novelty:.2f}")
    # 0.0 = predicted state has been seen before
    # 1.0 = new territory

    context = client.get_causal_context(
        state_id, window_ms=5000
    )
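The source does not say how the novelty score is computed. One plausible reading, sketched below purely as an assumption, is one minus the best cosine similarity between the predicted state and anything already stored, clamped to [0, 1]. The function `predict_then_retrieve_sketch` and the in-memory `memory` dict are hypothetical and stand in for the real predictor and vector search.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity of two equal-length vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def predict_then_retrieve_sketch(context_vector, predictor_fn, memory, limit=3):
    """Predict a future embedding, retrieve its nearest stored states,
    and score novelty as 1 - best similarity (an assumed definition)."""
    predicted = predictor_fn(context_vector)
    scored = sorted(
        ((cosine_similarity(predicted, vec), state_id) for state_id, vec in memory.items()),
        reverse=True,
    )[:limit]
    best = scored[0][0] if scored else 0.0
    novelty = min(1.0, max(0.0, 1.0 - best))
    return predicted, scored, novelty


memory = {"s1": [1.0, 0.0], "s2": [0.0, 1.0]}


def identity(vector):
    """Toy world model: predicts that nothing changes."""
    return list(vector)


def drift(vector):
    """Toy world model: predicts a shift along the second axis."""
    return [vector[0], vector[1] + 1.0]


_, hits_seen, novelty_seen = predict_then_retrieve_sketch([1.0, 0.0], identity, memory)
_, hits_drift, novelty_drift = predict_then_retrieve_sketch([1.0, 0.0], drift, memory)
print(f"seen before: {novelty_seen:.2f}, drifting: {novelty_drift:.2f}")
```

Under this definition an empty memory yields a novelty of 1.0, matching the "new territory" end of the scale.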

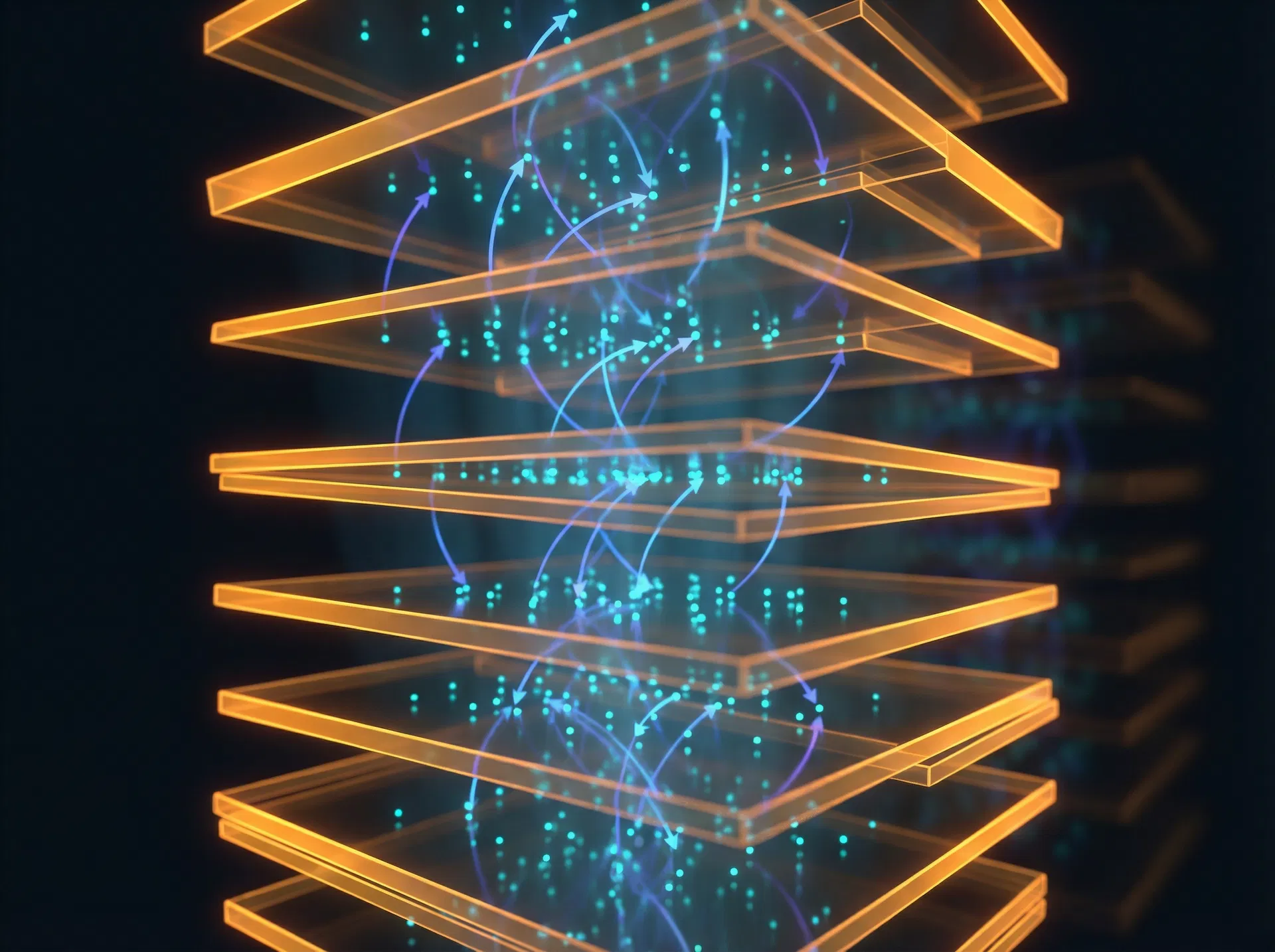

Architecture

Layered by design

Each layer has a single responsibility. Swap the storage backend, add a new adapter, or extend the retrieval layer without touching the rest.

| Layer | Components | Role |
|---|---|---|
| Application Layer | LociClient · AsyncLociClient · LocalLociClient | insert · query · predict_and_retrieve |
| Retrieval Layer | predict.py · funnel.py | predict-then-retrieve + novelty · multi-scale coarse→fine search |
| Indexing & Routing | spatial/ · temporal/ | multi-res Hilbert + overlap · epoch sharding + decay scoring |
| Adapters Layer | V-JEPA 2 · DreamerV3 · Generic numpy/torch | world model integration |
| Storage Layer | Qdrant · MemoryStore | one collection per temporal epoch · in-process, no infra needed |
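As a rough illustration of why a swappable storage layer keeps the upper layers unchanged, the sketch below defines a small storage interface and an in-process implementation. Every name here (`EpochStore`, `DictStore`, `insert_state`) is hypothetical, and the interface is an assumption rather than LOCI's actual one; dot-product scoring is used only to keep the example short.

```python
from typing import Dict, List, Protocol, Sequence, Tuple


class EpochStore(Protocol):
    """Assumed minimal contract a storage backend would satisfy."""

    def upsert(self, collection: str, point_id: str,
               vector: Sequence[float], payload: dict) -> None: ...

    def search(self, collection: str, vector: Sequence[float],
               limit: int) -> List[Tuple[str, float]]: ...


class DictStore:
    """In-process backend: one dict per epoch collection, no infrastructure."""

    def __init__(self) -> None:
        self._collections: Dict[str, Dict[str, Tuple[List[float], dict]]] = {}

    def upsert(self, collection, point_id, vector, payload):
        self._collections.setdefault(collection, {})[point_id] = (list(vector), payload)

    def search(self, collection, vector, limit):
        points = self._collections.get(collection, {})
        scored = [
            (point_id, sum(a * b for a, b in zip(vector, stored)))
            for point_id, (stored, _payload) in points.items()
        ]
        scored.sort(key=lambda hit: hit[1], reverse=True)
        return scored[:limit]


def insert_state(store: EpochStore, timestamp_ms: int, epoch_size_ms: int,
                 point_id: str, vector: Sequence[float], payload: dict) -> str:
    """Routing logic depends only on the interface, not on the backend."""
    collection = f"loci_{timestamp_ms // epoch_size_ms}"
    store.upsert(collection, point_id, vector, payload)
    return collection


store = DictStore()
used = insert_state(store, 1000, 5000, "state-1", [1.0, 0.0], {"x": 0.5})
insert_state(store, 1200, 5000, "state-2", [0.0, 1.0], {"x": 0.9})
nearest = store.search(used, [1.0, 0.0], limit=1)
print(used, nearest)
```

Any other backend that offers the same two methods could be passed to `insert_state` without changing it.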

World Model Adapters

V-JEPA 2

adapter.batch_clip_to_states(clip_output, ts, scene_id)

Meta's video joint-embedding predictive architecture

DreamerV3

adapter.rssm_to_world_state(h_t, z_t, position, ts, scene_id)

Recurrent state-space model

Generic

adapter.from_numpy(embedding, position, ts, scene_id)

Any numpy or torch embedding

Why not SpatCode or TANNS?

| Use case | Recommended |
|---|---|
| Exact 3D bounding-box range query | LOCI |
| Fuzzy "near this location" semantic search | SpatCode |
| Single-session temporal ANN, all data in one graph | TANNS |

Quick Start

Three deployment modes

In-memory for zero-infrastructure prototyping, Docker for a self-contained REST API, or directly against a Qdrant instance for production.

    pip install loci-stdb

    from loci import LocalLociClient, WorldState

    client = LocalLociClient(vector_size=512)

    state = WorldState(
        x=0.5, y=0.3, z=0.8,
        timestamp_ms=1000,
        vector=[0.1] * 512,
        scene_id="my_scene",
    )
    state_id = client.insert(state)

    results = client.query(
        vector=[0.1] * 512,
        spatial_bounds={
            "x_min": 0.0, "x_max": 1.0,
            "y_min": 0.0, "y_max": 1.0,
            "z_min": 0.0, "z_max": 1.0,
        },
        time_window_ms=(0, 5000),
        limit=10,
    )

Roadmap

v0.1 → v1.0

Current release is v0.3. The path to production readiness is tracked publicly on GitHub.

WorldState data model · Hilbert curve spatial encoding (4D) · Temporal sharding · Predict-then-retrieve

AsyncLociClient + parallel fan-out · Causal chain linking · Configurable distance metrics · Test suite with 70+ tests

Adaptive Hilbert resolution · Funnel search API · Result caching · Milvus/Weaviate benchmarks

Cross-scale causal linking · Scale-aware temporal decay

gRPC transport · Authentication + multi-tenancy · OpenTelemetry + Prometheus · Helm chart